A/B Testing Your Emails: What to Test and Why It Matters

Email marketing is one of the most powerful tools in your digital strategy. But how do you know if your emails are working?

That’s where A/B testing (also known as split testing) comes in.

If you’re not A/B testing your emails, you’re guessing. And in 2025’s competitive inbox, guesswork won’t get you clicks, opens, or conversions. In this post, you’ll learn what A/B testing is, what elements you should be testing, and how to use the results to improve your email marketing performance.

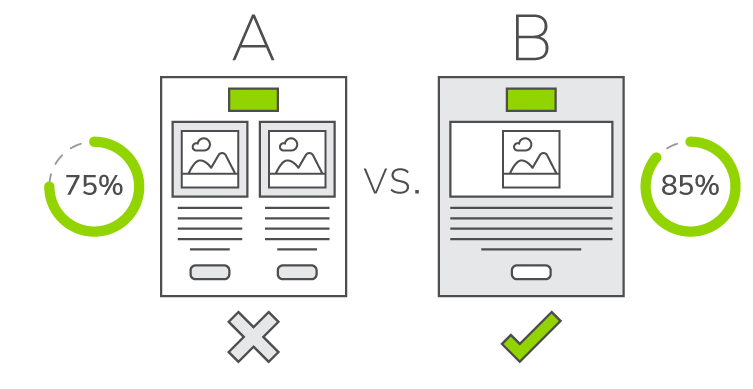

✅ What Is A/B Testing in Email Marketing?

A/B testing means sending two versions of an email, each to its own small, randomly selected slice of your list, to see which one performs better. Once you determine the “winner,” the best-performing version gets sent to the rest of your audience.

Think of it as running a mini-experiment to discover what resonates with your subscribers.
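To make the mechanics concrete, here is a minimal Python sketch of that split: shuffle the list, carve out a small test sample, divide it equally into groups A and B, and hold back the rest for the winning version. The function name `split_for_ab_test`, its parameters, and the example addresses are hypothetical. Most email platforms handle this split for you, so treat this as an illustration of the idea rather than how any particular tool works.

```python
import random


def split_for_ab_test(subscribers, test_fraction=0.2, seed=None):
    """Randomly split a subscriber list into group A, group B, and a remainder.

    test_fraction is the share of the list used for the test (e.g. 0.1 to 0.2).
    It is divided equally between A and B; everyone else is held back to
    receive the winning version later. (Hypothetical helper for illustration.)
    """
    if not 0 < test_fraction <= 1:
        raise ValueError("test_fraction must be greater than 0 and at most 1")
    rng = random.Random(seed)  # seeded generator makes the split reproducible
    shuffled = list(subscribers)  # copy so the caller's list is left untouched
    rng.shuffle(shuffled)
    half = int(len(shuffled) * test_fraction) // 2
    group_a = shuffled[:half]
    group_b = shuffled[half:2 * half]
    remainder = shuffled[2 * half:]  # an odd leftover test slot falls in here
    return group_a, group_b, remainder


# Hypothetical list of 1,000 subscribers
subscribers = [f"user{i}@example.com" for i in range(1000)]
a, b, rest = split_for_ab_test(subscribers, test_fraction=0.2, seed=42)
print(len(a), len(b), len(rest))  # 100 100 800
```

Random assignment matters more than the exact percentages: if group A were, say, your newest subscribers, any difference in results might come from who received the email rather than from the change you made.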

Why A/B Testing Matters

- Improve open rates, click-throughs, and conversions
- Understand your audience’s preferences
- Make data-driven decisions
- Avoid email fatigue and increase engagement
- Optimize subject lines, content, and layout without guessing

The best part? You don’t need to overhaul your strategy—just tweak one element at a time.

What You Can (and Should) Test in Your Emails

Let’s break down the elements you can A/B test and why each one matters.

1. Subject Line

Why it matters: It’s the first thing people see and determines whether they open the email at all.

What to test:

- Short vs. long subject lines
- Question vs. statement
- Use of emojis
- Personalization (e.g., first name)
- Urgency or curiosity-driven language

Example:

- A: “New Templates Inside!”
- B: “Boost Your Content with These Free Templates”

2. Preheader Text

Why it matters: The preheader acts like a second subject line. It gives your reader more reason to open.

What to test:

- Teasing the content vs. summarizing it
- Adding a CTA
- Asking a question
- Using personalization

Example:

- A: “Click to see what’s new”
- B: “Can this one tool save you 5 hours a week?”

3. Email Copy

Why it matters: The right tone and message can boost engagement and conversions.

What to test:

- Short vs. long copy
- Conversational vs. formal tone
- Listicle format vs. paragraph format
- Story-driven intro vs. direct approach
- Including first-person voice (“I” or “we”)

Example:

- A: Short, punchy copy
- B: Story format with a personal touch

4. CTA (Call to Action)

Why it matters: Your CTA is what gets people to take action, whether it’s to read more, sign up, or buy.

What to test:

- Button vs. text link
- CTA placement (top, middle, bottom)
- CTA wording (e.g., “Get the Guide” vs. “Download Now”)
- Number of CTAs per email

Example:

- A: “Read the Full Blog Post”
- B: “Start Learning Now”

5. Images vs. No Images

Why it matters: Visuals can enhance or distract depending on your audience and message.

What to test:

- Including product or lifestyle images
- Plain-text vs. graphic-heavy layout
- Infographic vs. list format
- Hero image vs. none

Pro Tip: Always include alt text for accessibility and deliverability.

6. Send Time & Day

Why it matters: The same email can perform drastically differently depending on when it’s sent.

What to test:

- Morning vs. afternoon
- Weekday vs. weekend
- Tuesday vs. Thursday (a commonly recommended send day vs. an alternative worth trying)

Example:

- A: Sent at 10 AM on Tuesday
- B: Sent at 3 PM on Thursday

7. Sender Name

Why it matters: People decide whether to open based on who the email is from.

What to test:

- Brand name vs. personal name
- “Jane at Company” vs. just “Company Name”

Example:

- A: From “Ayomi at SmartBrand”
- B: From “SmartBrand Marketing”

How to Run an A/B Test (Step by Step)

- Pick One Variable to Test
→ Never test multiple things at once, or you won’t know which change made the difference.
- Segment Your List
→ Split 10–20% of your list into two equal, randomly chosen groups (A and B).
- Set Your Goal Metric
→ Decide before you send whether you’re optimizing for opens, clicks, or purchases.
- Send and Wait
→ Give the test time to collect enough data; for open-rate tests this is usually 2–4 hours.
- Analyze the Results
→ Pick the winner and send it to the remaining 80–90% of your list.
- Document Your Learnings
→ Use the results to guide future emails.
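The “Analyze the Results” step is more reliable if you first check whether the gap between A and B is bigger than random noise. Below is a minimal Python sketch using a standard two-proportion z-test, a common statistical technique that the steps above don’t prescribe. The function names, the 0.05 threshold, and the open counts are hypothetical, made up for illustration.

```python
import math


def two_proportion_z_test(successes_a, n_a, successes_b, n_b):
    """Two-sided two-proportion z-test.

    successes_* are opens (or clicks, or purchases) and n_* are emails
    delivered to each group. Returns (rate_a, rate_b, z, p_value).
    """
    if n_a <= 0 or n_b <= 0:
        raise ValueError("each group needs at least one recipient")
    rate_a = successes_a / n_a
    rate_b = successes_b / n_b
    # Pooled rate assumes there is no real difference between A and B
    pooled = (successes_a + successes_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        # Every recipient in both groups behaved the same (all 0% or all 100%)
        return rate_a, rate_b, 0.0, 1.0
    z = (rate_b - rate_a) / se
    # Two-sided p-value from the standard normal distribution
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return rate_a, rate_b, z, p_value


def pick_winner(successes_a, n_a, successes_b, n_b, alpha=0.05):
    """Return "A" or "B" if the difference is significant at alpha, else None."""
    rate_a, rate_b, _, p_value = two_proportion_z_test(successes_a, n_a, successes_b, n_b)
    if p_value >= alpha:
        return None  # the difference could plausibly be random noise
    return "A" if rate_a > rate_b else "B"


# Hypothetical open counts: 1,000 emails per group, 22% vs. 26% open rate
print(pick_winner(220, 1000, 260, 1000))  # B
```

With these made-up numbers, B’s higher open rate is unlikely to be noise at the 0.05 threshold, so B would go to the rest of the list. A result of `None` suggests letting the test run longer, using a larger test group, or treating both versions as equivalent.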

Pro Tips for Better A/B Testing

- Always test one variable at a time
- Re-run winning tests periodically, because audience preferences change over time
- Keep a record of what’s worked in a “what we’ve learned” doc
- Trust the data, not assumptions or personal preferences
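If your “what we’ve learned” doc lives in a spreadsheet, a small script can append each test as one row. This is a minimal Python sketch, assuming a CSV file; the file name `email_ab_learnings.csv`, the `TestRecord` fields, and the example result are hypothetical, not a required format.

```python
import csv
import os
from dataclasses import asdict, dataclass, fields
from datetime import date


@dataclass
class TestRecord:
    """One row in a hypothetical A/B test log."""
    test_date: str
    variable: str   # the single element that was changed
    version_a: str
    version_b: str
    metric: str     # e.g. "open rate" or "click rate"
    winner: str     # "A", "B", or "no clear winner"
    notes: str = ""


def append_learning(path, record):
    """Append one test result to a CSV log, writing a header if the file is new."""
    is_new = not os.path.exists(path) or os.path.getsize(path) == 0
    with open(path, "a", newline="", encoding="utf-8") as f:
        writer = csv.DictWriter(f, fieldnames=[fld.name for fld in fields(TestRecord)])
        if is_new:
            writer.writeheader()
        writer.writerow(asdict(record))


record = TestRecord(
    test_date=date.today().isoformat(),
    variable="subject line",
    version_a="New Templates Inside!",
    version_b="Boost Your Content with These Free Templates",
    metric="open rate",
    winner="B",  # hypothetical result, not real data
)
append_learning("email_ab_learnings.csv", record)
```

Recording the losing version alongside the winner is what makes the log useful later: it shows what your audience didn’t respond to, not just what they did.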

✅ Final Thoughts

A/B testing your emails is one of the simplest ways to improve performance and increase ROI, without changing your entire strategy.

Whether you’re trying to improve open rates or get more clicks, testing even the smallest element can lead to better engagement, more conversions, and smarter marketing.